Build Bulletproof AI Automation: Input Validation That Prevents Wasted Speed on Bad Outputs

AI automation speeds everything up, but bad inputs ruin it. This is my practical guide to negotiate-validate-execute systems that cut errors and scale reliably at work.

Picture this: You've invested hours setting up AI automation to supercharge your workflow, only to watch it spit out useless outputs from flawed inputs. When you use AI for work, poor input validation squanders that speed, drives up errors, and kills AI productivity.

By the end, you'll master practical, multi-layered input validation strategies to build bulletproof AI automation systems that scale reliably, slashing errors and unlocking true AI productivity gains.

Why Input Validation is Essential for Reliable AI Automation

AI systems excel at processing data, but they amplify flaws in what you feed them. Garbage in, garbage out holds true here: invalid inputs lead to unreliable outputs that cascade through workflows. Research points to poor data quality as a top reason AI projects fail. That means many initiatives derail before models even get a fair shot.

Without validation, businesses face costly errors, from misguided decisions in finance to faulty recommendations in e-commerce. Robust input validation prevents this by ensuring data integrity upfront. It builds scalability, as clean inputs let AI pipelines handle growing volumes without breaking. Trust grows too, since validated systems deliver consistent results. Regular checks help catch data decay early and maintain accuracy. Implementing this core practice turns fragile automations into reliable engines for AI productivity.

Common Pitfalls: How Bad Inputs Derail AI Productivity

You've likely seen it: an AI workflow chokes on mismatched formats, like dates in MM/DD versus DD/MM order, halting everything downstream. Outliers sneak in too, skewing predictions and inflating error rates. Noisy or malicious inputs compound the issue: without multi-layered checks, systems remain exposed.
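To make the date pitfall concrete, here's a minimal sketch using only Python's standard library. It assumes your pipeline standardizes on YYYY-MM-DD dates; the helper name `parse_iso_date` is hypothetical, not something from a particular library.

```python
from datetime import date, datetime


def parse_iso_date(raw: str) -> date:
    """Accept only year-month-day input; reject other layouts instead of guessing."""
    try:
        return datetime.strptime(raw.strip(), "%Y-%m-%d").date()
    except ValueError as exc:
        raise ValueError(f"Expected YYYY-MM-DD, got {raw!r}") from exc


# The same string is a different day under each convention,
# which is why guessing the format is risky.
ambiguous = "03/04/2025"
as_us = datetime.strptime(ambiguous, "%m/%d/%Y").date()  # March 4, 2025
as_eu = datetime.strptime(ambiguous, "%d/%m/%Y").date()  # April 3, 2025
```

Rejecting the ambiguous string outright is a design choice: a loud failure at the door is usually cheaper than a silently swapped month and day further downstream.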

Edge cases hit hardest in real-world AI automation. A single anomalous transaction can trigger false fraud alerts, or incomplete customer data might leave queries only partially resolved. These pitfalls waste compute resources and erode confidence. Lax schema enforcement invites unexpected behavior in the APIs feeding your AI. The fix starts with recognizing these traps so you can protect your setups.

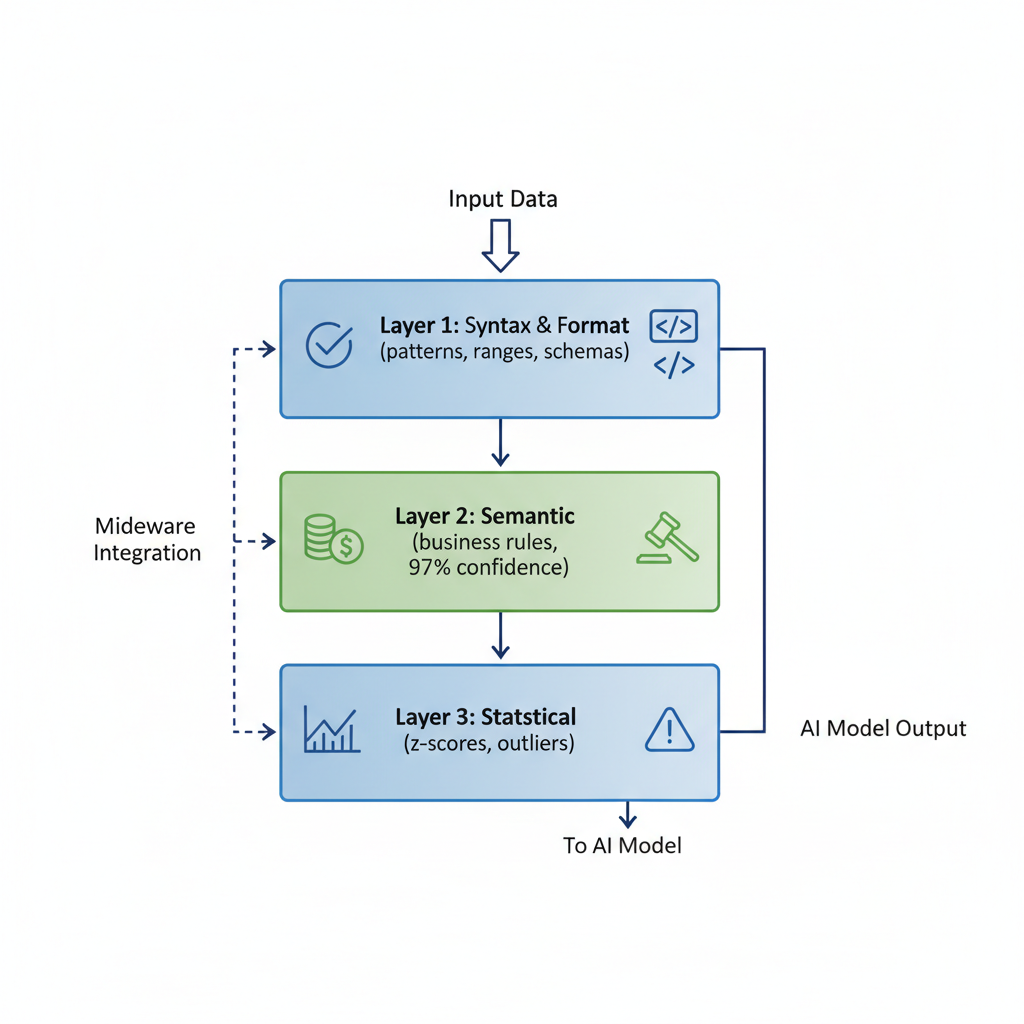

Building Multi-Layered Input Validation for AI Systems

Layer your defenses for comprehensive coverage.

- Layer 1: Syntax and format checks. Verify strings match expected patterns, numbers fall within ranges, and structures align with schemas. Tools like JSON Schema enforce this, so malformed payloads are rejected before they reach the model.

- Layer 2: Semantic validation via business rules. For financial fields, demand high confidence scores. This catches context mismatches, like invalid account details.

- Layer 3: Statistical anomalies. Flag outliers using z-scores or percentiles to block noisy data.

Combining these layers, with app-level sanitization plus model guardrails, defends against malicious inputs.
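For Layer 3, a z-score check can be sketched with the standard library alone. The function name `flag_outliers`, the sample numbers, and the threshold of 3.0 are all illustrative assumptions; tune them to your own data.

```python
from statistics import mean, stdev


def flag_outliers(history: list[float], candidates: list[float],
                  threshold: float = 3.0) -> list[float]:
    """Return candidates whose z-score against `history` exceeds `threshold`."""
    if len(history) < 2:
        raise ValueError("Need at least two historical values")
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        # Every historical value is identical, so anything different is unusual.
        return [x for x in candidates if x != mu]
    return [x for x in candidates if abs(x - mu) / sigma > threshold]


# Illustrative numbers only
history = [102.0, 98.5, 101.2, 99.8, 100.4, 97.9, 103.1, 100.0]
incoming = [101.0, 250.0, 99.0]
suspicious = flag_outliers(history, incoming)  # [250.0]
```

Scoring new values against a trusted baseline, rather than against the incoming batch itself, keeps a single extreme value from inflating the standard deviation and hiding itself.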

Integrate into AI pipelines via middleware. Route data through validators before models process it. This framework ensures reliability, helping avoid the failure rates linked to data issues.
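Here's one way the middleware idea might look in plain Python: a wrapper that runs each validation layer in order and only calls the model if all of them pass. Everything named here (`validated`, `check_syntax`, `check_business_rules`, `fake_model`, and the field rules) is a hypothetical stand-in for your own code.

```python
import re
from typing import Callable

Record = dict
Validator = Callable[[Record], list[str]]  # each layer returns error messages

ACCOUNT_RE = re.compile(r"[0-9]{12}")


def check_syntax(record: Record) -> list[str]:
    """Layer 1: types and formats."""
    errors = []
    amount = record.get("amount")
    if isinstance(amount, bool) or not isinstance(amount, (int, float)):
        errors.append("amount must be a number")
    if not ACCOUNT_RE.fullmatch(str(record.get("account", ""))):
        errors.append("account must be exactly 12 digits")
    return errors


def check_business_rules(record: Record) -> list[str]:
    """Layer 2: semantic rules (the limits here are illustrative)."""
    errors = []
    if not 0 < record["amount"] <= 1_000_000:
        errors.append("amount outside allowed range")
    return errors


def validated(model_call: Callable[[Record], str],
              layers: list[Validator]) -> Callable[[Record], str]:
    """Wrap `model_call` so it only runs on records that pass every layer."""
    def wrapper(record: Record) -> str:
        for layer in layers:
            errors = layer(record)
            if errors:
                # Stop at the first failing layer; later layers may
                # assume the earlier ones already passed.
                raise ValueError(f"{layer.__name__}: {'; '.join(errors)}")
        return model_call(record)
    return wrapper


def fake_model(record: Record) -> str:
    # Stand-in for the real model or API call.
    return f"processed {record['amount']:.2f} for {record['account']}"


pipeline = validated(fake_model, [check_syntax, check_business_rules])
result = pipeline({"amount": 150.0, "account": "123456789012"})
```

Ordering matters: the business-rule layer can safely compare `amount` to a number only because the syntax layer has already confirmed it is one.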

Top Tools and Frameworks for AI Input Validation

Python users turn to:

- Pydantic for declarative models that validate types and constraints at runtime.

- Great Expectations paired with it for data profiling and expectation suites tailored to AI datasets.

For APIs, JSON Schema defines rigorous structures, blocking non-conformant payloads early. In LLM chains, LangChain's output parsers, combined with your own validation code, can check model outputs and enforce rules mid-flow.

Monitor with Weights & Biases to track validation metrics over time. These tools fit seamlessly into a multi-layered approach. Choose based on your stack: Pydantic shines in app code, while Great Expectations handles batch data.

Step-by-Step Guide to Validate Inputs in Your AI Automation

Assess your pipeline first: map data sources, identify formats, and spot failure points. List rules per layer: syntax for emails, semantics for business logic, stats for anomalies.

Select layers matching your needs. For a payment system, add strict confidence thresholds.

Code it simply in Python with Pydantic:

    from pydantic import BaseModel, validator

    # Note: `validator` is the Pydantic v1 decorator. It still works on v2
    # but is deprecated there in favor of `field_validator`.


    class PaymentInput(BaseModel):
        amount: float
        account: str

        @validator('amount')
        def check_amount(cls, v):
            # Reject negative amounts and anything above the 1,000,000 cap
            if v < 0 or v > 1000000:
                raise ValueError('Invalid amount range')
            return v

        @validator('account')
        def check_confidence(cls, v):
            # Simulated confidence check: require exactly 12 digits
            if not (len(v) == 12 and v.isdigit()):
                raise ValueError('Account fails confidence threshold')
            return v


    # Usage
    inputs = [PaymentInput(amount=150.0, account="123456789012")]

Test rigorously: feed edge cases, measure pass rates, and iterate. Deploy in pipelines, logging failures for refinement. Regular checks keep it sharp. Think about how this fits your specific AI productivity goals; maybe start with one layer and build from there.
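One way to feed edge cases and measure pass rates is a small table of inputs with the outcome you expect for each. The sketch below uses a plain-Python stand-in, `is_valid_payment` (a hypothetical helper that loosely follows the payment rules above), so it runs without extra dependencies; in a real pipeline you'd call your own validator instead.

```python
import logging

logging.basicConfig(level=logging.INFO)
log = logging.getLogger("validation")


def is_valid_payment(amount, account) -> bool:
    """Hypothetical stand-in that loosely follows the payment rules."""
    if isinstance(amount, bool) or not isinstance(amount, (int, float)):
        return False
    if amount < 0 or amount > 1_000_000:
        return False
    return (isinstance(account, str) and len(account) == 12
            and account.isascii() and account.isdigit())


# (amount, account, should_pass)
EDGE_CASES = [
    (150.0, "123456789012", True),
    (0, "123456789012", True),              # lower boundary
    (1_000_000, "123456789012", True),      # upper boundary
    (-0.01, "123456789012", False),         # just below range
    (1_000_000.01, "123456789012", False),  # just above range
    (150.0, "12345678901", False),          # 11 digits
    (150.0, "12345678901a", False),         # non-digit character
    ("150", "123456789012", False),         # wrong type
]

mismatches = []
passed = 0
for amount, account, should_pass in EDGE_CASES:
    ok = is_valid_payment(amount, account)
    passed += ok
    if ok != should_pass:
        mismatches.append((amount, account))
        log.warning("Unexpected result for %r / %r: got %s", amount, account, ok)

pass_rate = passed / len(EDGE_CASES)
log.info("pass rate: %.0f%% (%d mismatches)", pass_rate * 100, len(mismatches))
```

The mismatch list is the number to watch while iterating: any entry means the validator disagrees with what you expected. Note that Pydantic's default mode would coerce the string "150" into a float, whereas this stand-in rejects it; decide which behavior you want and encode it as a test case.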

Scaling Validated AI Automation for Enterprise AI Productivity

Embed validation in CI/CD to automate tests on every commit. For high volume, distribute checks across clusters, processing millions of inputs without bottlenecks.
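As a rough sketch of what a per-commit check might look like, here are pytest-style tests: plain functions with `assert`, which pytest would collect from a file such as `test_validation.py`. The file name and the `validate_order` helper are hypothetical.

```python
def validate_order(order: dict) -> list[str]:
    """Hypothetical validator under test; returns a list of error messages."""
    errors = []
    qty = order.get("quantity")
    if isinstance(qty, bool) or not isinstance(qty, int) or qty < 1:
        errors.append("quantity must be a positive integer")
    if not str(order.get("sku", "")).strip():
        errors.append("sku is required")
    return errors


def test_accepts_well_formed_order():
    assert validate_order({"sku": "ABC-1", "quantity": 2}) == []


def test_rejects_missing_sku():
    assert "sku is required" in validate_order({"quantity": 2})


def test_rejects_bad_quantity():
    # Zero, negatives, floats, strings, and None should all be refused.
    for bad in (0, -1, 1.5, "2", None):
        assert validate_order({"sku": "ABC-1", "quantity": bad})
```

Running `pytest` as a pipeline step is enough to gate merges, since it exits with a non-zero status when any assertion fails.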

Track metrics like validation pass rate, error reduction, and throughput. Ongoing tweaks yield strong ROI: companies report dramatic cuts in processing time and big jumps in autonomous query handling, leading to serious cost savings.
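Pass rate and throughput can be tracked with a small counter object like the one sketched here. `ValidationMetrics` and its method names are assumptions for illustration; the resulting numbers could then be sent to whatever monitoring tool you already use.

```python
import time
from dataclasses import dataclass


@dataclass
class ValidationMetrics:
    total: int = 0
    passed: int = 0
    seconds: float = 0.0

    def record_batch(self, records: list, validator) -> list:
        """Validate a batch, update the counters, and return the records that passed."""
        start = time.perf_counter()
        good = [r for r in records if validator(r)]
        self.seconds += time.perf_counter() - start
        self.total += len(records)
        self.passed += len(good)
        return good

    @property
    def pass_rate(self) -> float:
        return self.passed / self.total if self.total else 0.0

    @property
    def throughput(self) -> float:
        """Records validated per second of validation time."""
        return self.total / self.seconds if self.seconds else 0.0


metrics = ValidationMetrics()
# Toy validator: keep only positive numbers
clean = metrics.record_batch([5, -1, 12, 0, 7], lambda x: x > 0)
```

A sudden drop in `pass_rate` between batches is often the first visible sign that an upstream source changed its format.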

This scales systems reliably. Robust validation ensures long-term performance, especially as you push AI automation harder in your work.

Real-World Wins: AI Automation Transformed by Input Validation

E-commerce teams have overhauled order processing with validated inputs, slashing times dramatically and boosting autonomous query resolution. Cashier-less stores hit high accuracy in product recognition and cut checkout times substantially, proving validation's edge in retail.

Lessons apply everywhere: layer checks, monitor continuously, and watch productivity soar. You'll see similar gains when you apply these to your setups.

Implement these input validation strategies today to transform your AI automation from fragile to bulletproof. Watch errors drop, AI productivity soar, and your workflows scale with far less friction. Start validating now. What's stopping you from learning these practical AI skills?
