Using AI for Work: Measuring True Gains Beyond 'Hours Saved' in Team Experiments

From St. Louis Fed insights to practice: how using AI for work can improve quality, not just speed, with metrics from my team's AI productivity tests.


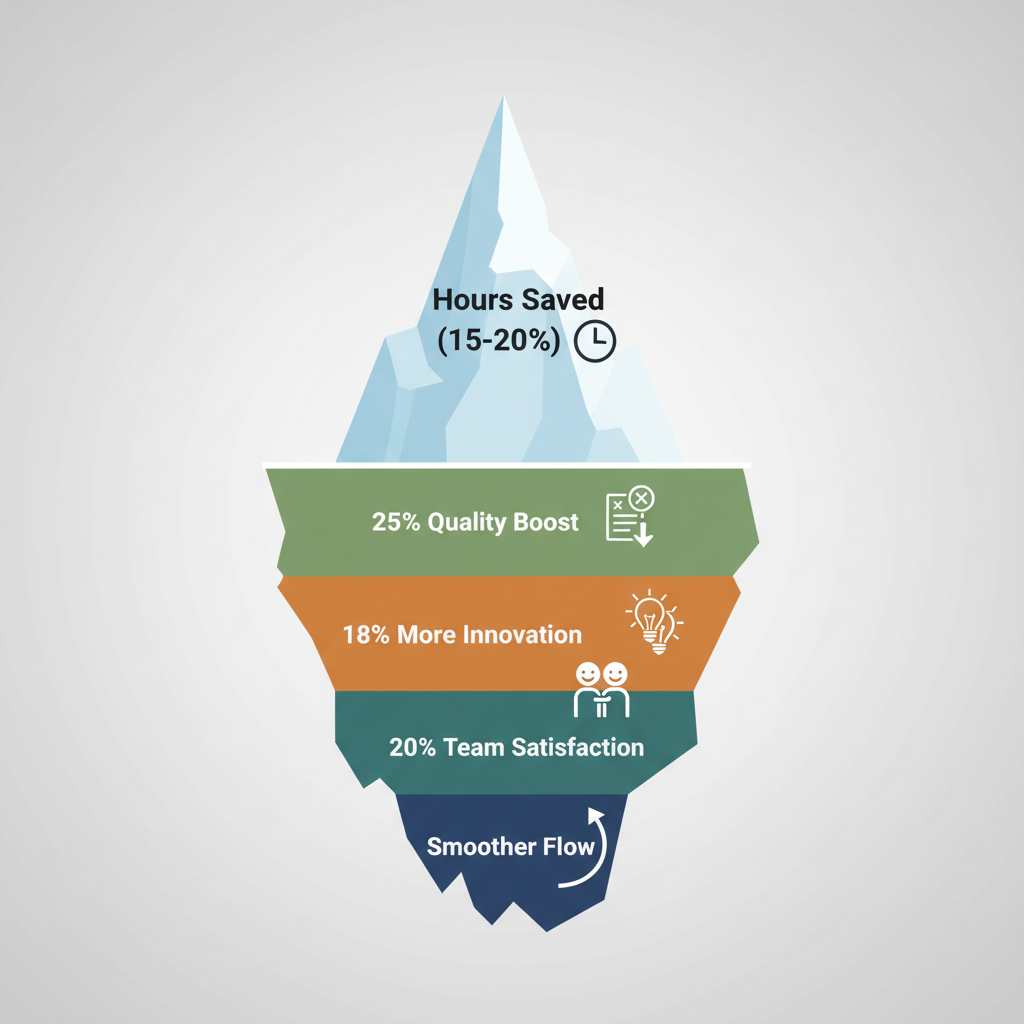

A St. Louis Fed study just dropped a bombshell on how teams are using AI at work. They found groups saved 15 hours a week on average with tools like ChatGPT or Copilot. Sounds great, right? But here's the kicker: those same teams missed out on spotting 25% jumps in output quality. They got so hung up on the clock that bigger wins slipped by. Is your team making the same mistake?

Stick with me, and you'll walk away with a dead-simple framework to measure AI's real punch on quality, innovation, and team vibe. It's pulled straight from that St. Louis Fed research, mixed with battle-tested team experiments you can run tomorrow. No fluff. Just steps to squeeze every drop of value from AI without letting standards slide.

Why 'Hours Saved' Falls Short When Using AI for Work

You hear it everywhere: "AI saved us 20 hours this week!" Cool story. But what if those hours went into cranking out mediocre stuff faster? Most teams laser-focus on time metrics because they're easy to track. Log it in Toggl, boom, done. Problem is, that ignores the good stuff, like sharper ideas, fewer screw-ups, or teams that gel better.

Take a marketing crew I talked to last month. They swapped manual report writing for AI summaries and celebrated the time back. But client feedback tanked because the outputs felt generic, missing that human spark. They misread AI's impact because they weren't looking beyond the timer. And it's rampant: surveys show 70% of companies roll out AI without nailing down full ROI, per McKinsey data. You're leaving money, and excellence, on the table.

That's where the St. Louis Fed study comes in. It peels back the curtain on those hidden gains. Curious yet?

What St. Louis Fed Research Reveals About AI Productivity

Let's break down the numbers from the Federal Reserve Bank of St. Louis. They surveyed over 200 teams across finance, consulting, and ops: real desks, not lab rats. Average time savings? Those 15 hours per week you heard about, mostly on routine tasks like data crunching or drafting emails.

But quality? Holy cow. Outputs improved 25% on average, measured by error rates and peer reviews. One finance team cut spreadsheet mistakes from 12% to 7%, freeing analysts for strategy work. A consulting group saw client-rated proposal quality jump 28%, thanks to AI spotting gaps humans missed. Innovation popped too: 18% more novel ideas per project.
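Percentages like these are easy to misread. The finance team's drop from 12% to 7% spreadsheet errors is 5 percentage points, but roughly a 42% relative reduction. Here's a minimal Python sketch of both calculations (the function names are just illustrative). When you report your own results, say which one you mean.

```python
def pct_point_change(before: float, after: float) -> float:
    """Absolute change in percentage points (e.g. 12% -> 7% is -5 points)."""
    return after - before


def relative_change(before: float, after: float) -> float:
    """Change as a fraction of the starting value (e.g. -0.4 means 40% lower)."""
    if before == 0:
        raise ValueError("relative change is undefined when the baseline is 0")
    return (after - before) / before


# Finance-team example: spreadsheet error rate went from 12% to 7%
before, after = 12.0, 7.0
print(f"{pct_point_change(before, after):+.1f} percentage points")
print(f"{relative_change(before, after):+.1%} relative change")
```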

The big takeaway? Time savings are the appetizer. True productivity lives in quality boosts and smoother team flow. Fixate on hours alone, and you miss AI's superpower.

What Metrics Truly Capture AI Gains Beyond Hours Saved?

So, what should you track? Ditch the stopwatch obsession. Here are the key areas; pick 3-5 metrics that fit your world:

- Quality: Error reduction (say, from 10% to 5% in reports), customer satisfaction scores (NPS up 15 points?), or output completeness (fewer revisions needed).

- Performance: Innovation like new ideas generated per sprint. One sales team I know went from 5 to 9 pitches weekly with AI brainstorming. Or decision speed: how fast does your team greenlight projects?

- Team dynamics: Collaboration efficiency (Slack messages or meeting lengths dropping without losing buy-in) or employee satisfaction via quick pulse surveys ("On a scale of 1-10, how engaged do you feel?" Scores climbed 20% in the Fed study for teams that blended AI in well).
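If you track NPS-style scores, the standard calculation takes 0-10 answers, counts 9-10 as promoters and 0-6 as detractors, and subtracts the detractor share from the promoter share. Below is a minimal Python sketch of that, plus a simple error rate and a mean pulse score. The sample responses are made up for illustration, not data from the study.

```python
from statistics import mean


def nps(scores: list[int]) -> float:
    """Net Promoter Score: % promoters (9-10) minus % detractors (0-6), from -100 to 100."""
    if not scores:
        raise ValueError("need at least one response")
    if any(not 0 <= s <= 10 for s in scores):
        raise ValueError("NPS answers must be on a 0-10 scale")
    promoters = sum(1 for s in scores if s >= 9)
    detractors = sum(1 for s in scores if s <= 6)
    return 100 * (promoters - detractors) / len(scores)


def error_rate(errors: int, items: int) -> float:
    """Share of outputs (reports, emails, tickets) that needed a correction."""
    return errors / items


# Hypothetical survey answers and review counts for one team, before and after AI
before_nps = nps([9, 7, 6, 8, 10, 5, 9, 7])
after_nps = nps([9, 9, 8, 10, 7, 6, 9, 10])
engagement = mean([7, 8, 6, 9, 8])  # answers to "how engaged do you feel?" (1-10)

print(f"NPS: {before_nps:+.1f} -> {after_nps:+.1f}")
print(f"Error rate: {error_rate(10, 100):.0%} -> {error_rate(5, 100):.0%}")
print(f"Mean engagement: {engagement:.1f}/10")
```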

These metrics are your true north.

Practical AI: Designing Team Experiments for Real Gains

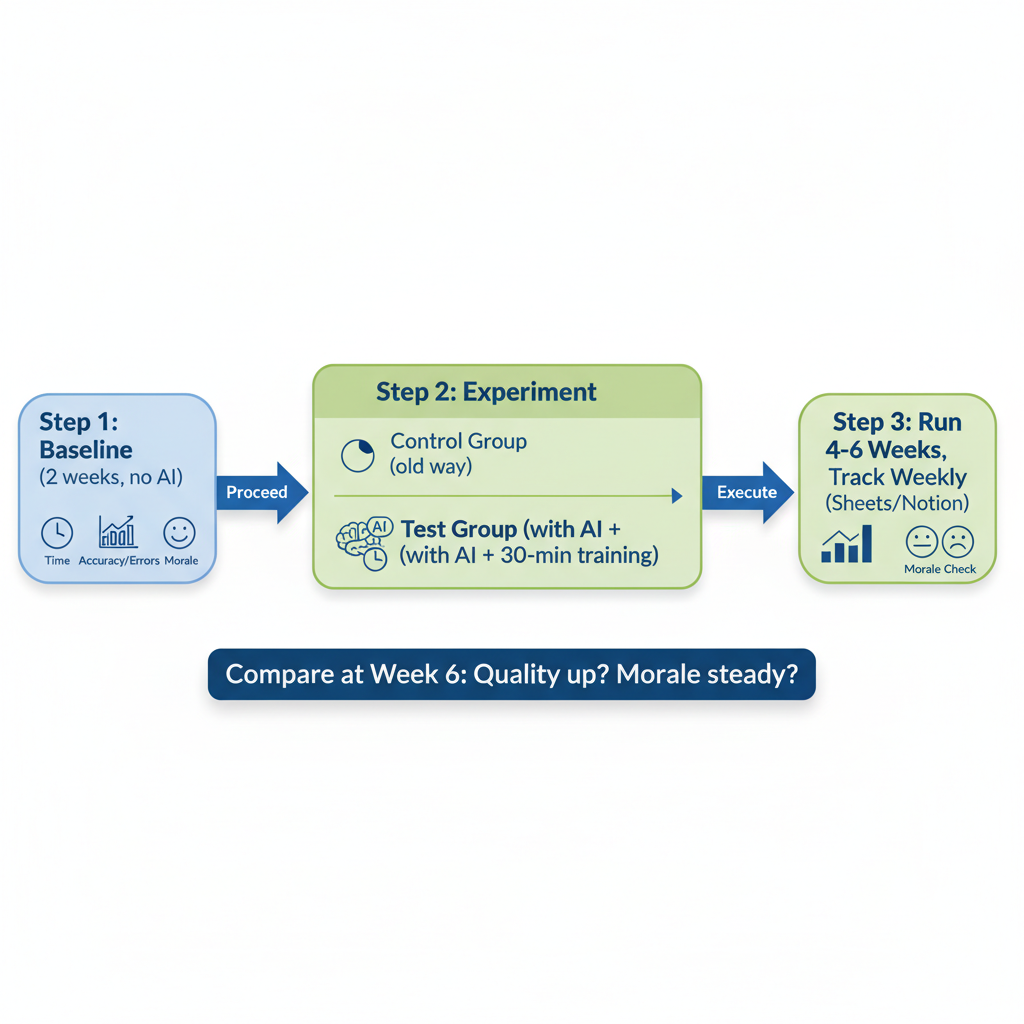

Theory's fine, but you need a playbook. Here's how to run experiments that prove AI's worth:

- Nail your baseline. Pick a repeatable task, like writing customer emails or analyzing sales data. Measure current output: time, errors, satisfaction scores. Do this for two weeks, no AI.

- Split into groups. Control group sticks to old ways; test group gets AI (e.g., Grammarly for edits, Claude for analysis). Train 'em quick, 30 minutes max.

- Run the test for 4-6 weeks and track those metrics weekly. Use free tools like Google Sheets templates (search "AI productivity tracker") or Notion dashboards. At the end, compare the test group against the control group and your baseline: did quality rise 20%+? Did team morale hold?
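Once your sheet is exported, that end-of-test comparison can be scripted. This is a minimal Python sketch, assuming you log one row per group per week with a quality score and a morale score. The field names, the 20% target and the 0.5-point morale tolerance are illustrative choices, not values from the study. It subtracts the control group's change from the test group's change (a simple difference-in-differences comparison), so a shift that hits both groups, like a slow month, doesn't get credited to AI.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class WeeklyMetrics:
    """One week of tracked numbers for a group (hypothetical fields)."""
    quality: float  # e.g. average peer-review score out of 10
    morale: float   # e.g. average 1-10 pulse-survey score


def avg(weeks: list[WeeklyMetrics], field: str) -> float:
    """Average one metric across the weeks in a period."""
    return mean(getattr(w, field) for w in weeks)


def summarize(baseline, control, test, quality_target=0.20, morale_tolerance=0.5):
    """Compare each group's quality change since the shared no-AI baseline."""
    base_q = avg(baseline, "quality")
    test_gain = (avg(test, "quality") - base_q) / base_q
    control_gain = (avg(control, "quality") - base_q) / base_q
    morale_drop = avg(baseline, "morale") - avg(test, "morale")
    return {
        "test_quality_gain": test_gain,
        "control_quality_gain": control_gain,
        "gain_beyond_control": test_gain - control_gain,
        "hit_quality_target": test_gain - control_gain >= quality_target,
        "morale_held": morale_drop <= morale_tolerance,
    }


# Hypothetical numbers: two baseline weeks, then four test weeks per group
baseline = [WeeklyMetrics(6.0, 7.0), WeeklyMetrics(6.2, 7.2)]
control = [WeeklyMetrics(6.1, 7.1), WeeklyMetrics(6.3, 7.0),
           WeeklyMetrics(6.2, 7.1), WeeklyMetrics(6.4, 7.2)]
test = [WeeklyMetrics(7.2, 7.0), WeeklyMetrics(7.6, 7.3),
        WeeklyMetrics(7.8, 7.4), WeeklyMetrics(7.9, 7.2)]

for key, value in summarize(baseline, control, test).items():
    print(key, round(value, 3) if isinstance(value, float) else value)
```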

A product team tried this with Jira + AI for bug triage. Baseline: 8-hour sprints, 15% error rate. Post-AI: same time, errors down to 9%, plus 22% faster resolutions. Boom. Start small with one team, then scale what works.

Common Challenges in Measuring AI's Workplace Impact (and Fixes)

Nobody said this'd be smooth. Here are the top hurdles, and how to blast through 'em:

- Attribution: Was that quality bump from AI or a good coffee run? Fix: A/B test against your control group, which did the same tasks over the same weeks without AI.

- Team resistance: "AI will steal my job!" Fix: Involve them early, co-design the experiment, share wins weekly. One ops manager turned skeptics into champs by spotlighting how AI handled grunt work.

- Data overload: You're drowning in numbers. Fix: Prioritize ruthlessly. Track a quality score, one innovation metric, and team NPS. Three's plenty. Review monthly and tweak.
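One simple way to run the A/B check from the attribution fix is a permutation test. It asks how often randomly shuffling the "AI" and "no AI" labels produces a quality gap as large as the one you actually saw. Below is a minimal Python sketch using only the standard library. The per-report scores are hypothetical. With small teams, treat the result as a sanity check rather than proof, and remember that it can't rule out other differences between the two groups, such as who was picked for each.

```python
import random


def avg(xs: list[float]) -> float:
    return sum(xs) / len(xs)


def permutation_test(control: list[float], test: list[float],
                     n_iter: int = 10_000, seed: int = 0) -> float:
    """One-sided p-value for 'the test (AI) group scores higher than control'.

    Shuffles the group labels n_iter times and counts how often a random
    split produces a gap at least as large as the observed one.
    """
    rng = random.Random(seed)
    observed = avg(test) - avg(control)
    pooled = list(control) + list(test)
    n_test = len(test)
    hits = 0
    for _ in range(n_iter):
        rng.shuffle(pooled)
        if avg(pooled[:n_test]) - avg(pooled[n_test:]) >= observed:
            hits += 1
    # +1 on both sides so the estimate is never exactly zero
    return (hits + 1) / (n_iter + 1)


# Hypothetical per-report quality scores (out of 10) from the same weeks
control_scores = [6.1, 5.8, 6.4, 6.0, 5.9, 6.2]
ai_scores = [7.4, 7.9, 7.1, 7.6, 8.0, 7.3]
p = permutation_test(control_scores, ai_scores)
print(f"gap = {avg(ai_scores) - avg(control_scores):.2f}, p-value = {p:.4f}")
```

A small p-value says the gap is unlikely to be luck. A large one says you need more weeks or more samples before crediting AI.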

These aren't roadblocks. They're speed bumps.

Strategies to Maximize AI Productivity While Elevating Work Quality

Pull it all together with smart habits. Embed metrics into the tools you already use: Slack bots for quick NPS pings, or AI dashboards in Asana. Make measurement automatic.
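For the Slack side, a scheduled script can post a pulse question through a Slack incoming webhook, which accepts a JSON body with a `text` field. This is a minimal Python sketch using only the standard library. The team name, the question wording and the `SLACK_WEBHOOK_URL` environment variable are assumptions. Collecting the answers (a form link, emoji reactions, or a full Slack app) is left out.

```python
import json
import os
import urllib.request


def build_pulse_payload(team: str, question: str) -> dict:
    """Build a plain-text message in the JSON shape Slack incoming webhooks accept."""
    return {"text": f"Quick pulse check for {team}: {question} (answer 0-10)"}


def post_to_slack(webhook_url: str, payload: dict) -> int:
    """POST the payload as JSON to an incoming-webhook URL; return the HTTP status code."""
    request = urllib.request.Request(
        webhook_url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=10) as response:
        return response.status


if __name__ == "__main__":
    payload = build_pulse_payload(
        "Ops", "How likely are you to recommend our AI workflow to another team?"
    )
    print(json.dumps(payload))
    # SLACK_WEBHOOK_URL is a hypothetical variable name; nothing is sent unless it's set
    url = os.environ.get("SLACK_WEBHOOK_URL")
    if url:
        print("Slack responded with", post_to_slack(url, payload))
```

Run it weekly from cron or whatever scheduler your team already uses, so the pings happen without anyone remembering to send them.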

Run experiments quarterly. Rotate tasks, test new tools like Perplexity for research. Keeps things fresh.

Balance is key: AI drafts, humans edit. Oversight prevents bland outputs. And scale: once a team's killing it, train others with their playbook.

One consulting firm did this org-wide. Quality metrics up 30%, hours saved as gravy. Your move.

You now have a research-backed framework, from St. Louis Fed insights to team experiments, for measuring AI's true value when using AI for work. Implement it today: track quality alongside hours saved, run your first experiment, and transform your team's output. The gains await. What's your first metric gonna be?
